System Overview

Abstract

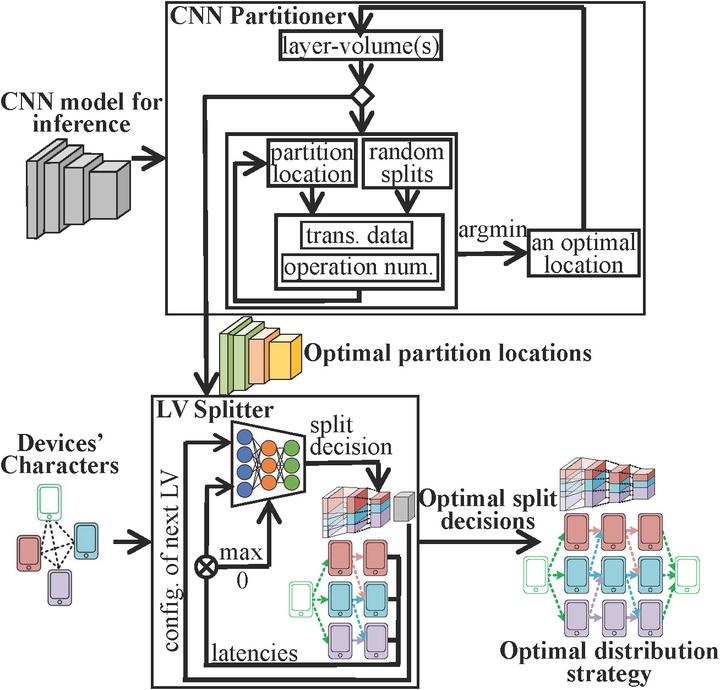

As the number of edge devices with computing resources (e.g., embedded GPUs, mobile phones, and laptops) increases, recent studies have demonstrated that it can be beneficial to run convolutional neural network (CNN) inference collaboratively on more than one edge device. However, these studies make strong assumptions about device conditions, so they are far from practical. In this work, we propose a general method, called DistrEdge, that uses deep reinforcement learning to provide CNN inference distribution strategies in environments with multiple IoT edge devices. By addressing heterogeneity in devices and network conditions, as well as the nonlinear characteristics of CNN computation, DistrEdge adapts to a wide range of cases (e.g., different network conditions and various device types). In our experiments, we use the latest embedded AI computing devices (e.g., NVIDIA Jetson products) to construct scenarios with heterogeneous device types. Our evaluations show that DistrEdge properly adjusts its distribution strategy according to the computing characteristics of the devices and the network conditions, achieving a 1.1× to 3× speedup over state-of-the-art methods.
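To make the role of device and network heterogeneity concrete, the sketch below uses a deliberately simplified, hypothetical cost model. It assumes one CNN layer's output rows are split across devices, and that each device's time is linear in its share: compute time at `compute_rate` rows/s plus transfer time over a link of `bandwidth` bytes/s. Under that assumption, an even split leaves the fast device idle while the slow device becomes the bottleneck, whereas a split proportional to each device's effective throughput balances them. The function names, the row-wise split, and all numbers are illustrative assumptions. This is not DistrEdge's method: the abstract stresses that real CNN computation is nonlinear, which is exactly why a closed-form proportional split like this one is insufficient and a learned (DRL) strategy is used instead.

```python
from typing import List, Sequence


def stage_latency(rows: Sequence[int], compute_rate: Sequence[float],
                  bandwidth: Sequence[float], bytes_per_row: float) -> float:
    """Latency of one distributed layer under a toy *linear* cost model.

    Hypothetical model: device i computes rows[i] output rows at
    compute_rate[i] rows/s, then sends them over a link of bandwidth[i]
    bytes/s. Devices run in parallel, so the layer finishes when the
    slowest device finishes.
    """
    return max(
        n / r + n * bytes_per_row / b
        for n, r, b in zip(rows, compute_rate, bandwidth)
    )


def proportional_split(total_rows: int, compute_rate: Sequence[float],
                       bandwidth: Sequence[float],
                       bytes_per_row: float) -> List[int]:
    """Assign rows in proportion to each device's effective rows/s."""
    # Effective throughput folds per-row compute time and transfer time together.
    eff = [1.0 / (1.0 / r + bytes_per_row / b)
           for r, b in zip(compute_rate, bandwidth)]
    total_eff = sum(eff)
    rows = [int(total_rows * e / total_eff) for e in eff]
    # Rounding down loses fewer than len(eff) rows; give them to the fastest devices.
    leftover = total_rows - sum(rows)
    fastest_first = sorted(range(len(eff)), key=lambda k: eff[k], reverse=True)
    for i in fastest_first[:leftover]:
        rows[i] += 1
    return rows


if __name__ == "__main__":
    # Hypothetical setup: a fast device on a fast link, a slow device on a slow link.
    rate = [400.0, 100.0]   # rows/s each device can compute
    bw = [10e6, 2e6]        # link bandwidth in bytes/s
    bpr = 20_000.0          # bytes per output row
    total = 224             # output rows of the layer

    even = [total // 2, total - total // 2]
    balanced = proportional_split(total, rate, bw, bpr)
    print("even split:    ", even, stage_latency(even, rate, bw, bpr))
    print("balanced split:", balanced, stage_latency(balanced, rate, bw, bpr))
```

Running it shows the balanced split finishing several times sooner than the even split in this toy setting. Once per-device latency stops being linear in the assigned work (e.g., due to GPU kernel efficiency varying with input size), the proportional rule no longer yields a balanced split, which illustrates the gap that the abstract's learning-based approach targets.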